As highlighted by Walter Frick in the HBR Guide to Critical Thinking, skilled decision-makers do more than gather lots of data; they focus on predicting outcomes and carefully weighing what really matters. Most of us, though, have built-in blind spots: we tend to be overconfident in our judgment, fixate on the specifics of our own situation, and default to "yes or no" thinking when the world deals in likelihoods. This lesson introduces three everyday rules you can use right away to sidestep those pitfalls, each shown to help people make sharper, more resilient choices.

Overconfidence is everywhere, even among experts. This doesn’t mean your instincts are always wrong—but chances are, you’re more sure about your reasoning, predictions, and preferences than you should be.

The fix isn’t to become paralyzed with self-doubt. Instead, aim to align your confidence with the actual probabilities. For any decision, ask yourself: “If I were a little less sure, what else would I consider?” or “How well prepared am I for things to turn out very differently?” These questions act as checks that help you avoid tunnel vision and uncover options you might have skipped.

Try taking it a step further: Once you’ve asked these questions, actually list other possible outcomes, risks, or explanations that your confidence might have led you to overlook. For example, if you’re convinced your new initiative will succeed, spend five minutes brainstorming reasons it might fail, or ways small changes could shift the odds. Imagine what precautions you’d take if you thought there was a real chance of being wrong. You’ll likely surface backup plans, different approaches, or new ways to test your assumptions, strengthening your decision before you lock it in.

This kind of deliberate “uncertainty check” is a quick way to make your confidence work for you, not against you. Over time, you’ll start catching the difference between healthy conviction and wishful certainty.
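If you want to track this habit over time, one option is to log your predictions with a stated confidence and later compare that confidence with how often you were actually right. The minimal Python sketch below assumes a hypothetical log (the `Prediction` records, the `calibration_gap` helper, and all the numbers are made up for illustration). It reports a single gap between your average confidence and your hit rate, where a positive value suggests overconfidence.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    claim: str
    confidence: float  # stated probability (0.0 to 1.0) that the claim comes true
    came_true: bool    # what actually happened


def calibration_gap(predictions):
    """Average stated confidence minus the fraction of claims that came true.

    Positive -> you were more confident than your track record justifies.
    Negative -> you were less confident than your track record justifies.
    """
    if not predictions:
        raise ValueError("need at least one prediction")
    avg_confidence = sum(p.confidence for p in predictions) / len(predictions)
    hit_rate = sum(p.came_true for p in predictions) / len(predictions)
    return avg_confidence - hit_rate


# Hypothetical log of past calls
log = [
    Prediction("Launch ships on time", 0.9, False),
    Prediction("Client renews contract", 0.8, True),
    Prediction("New hire ramps up in 3 months", 0.9, True),
    Prediction("Campaign hits signup target", 0.8, False),
]
print(f"Calibration gap: {calibration_gap(log):+.2f}")  # Calibration gap: +0.35
```

This is a coarse summary: overconfident and underconfident calls can cancel each other out, so with a longer log you’d compare hit rates separately for each confidence level (for example, all the calls you made at 80%).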

Most decisions are made with one eye on our unique context—what makes our team, company, or initiative “different.” But there’s powerful evidence that the best predictions start by looking at similar past cases (sometimes called taking the “outside view”).

If you’re launching a new product, ask: How often do products in this category actually succeed? Considering a job leap? What percentage of people who made a similar move were glad they did? These kinds of questions are about finding the base rate—a simple but powerful concept. A base rate is the typical outcome, frequency, or success rate for situations similar to yours. Instead of focusing only on what makes your situation unique (“the inside view”), the base rate asks: “What usually happens when people try this?”

To measure a base rate, look for reliable data or patterns from a broad set of cases like yours:

- Search for industry benchmarks or studies that track how often similar projects succeed.

- Ask colleagues, mentors, or teams about their past experiences in comparable situations.

- Use sources like market research reports, company case studies, or published statistics.

For example, before launching a new feature, you might learn that only 1 in 4 launches in your industry meet their targets. Or, before switching careers, you might discover that people in your network who made a similar move tend to feel satisfied (or regretful) after the fact. These base rates help anchor your expectations and call out risks you might ignore if you focused only on your specific plan.
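Once you’ve gathered comparable cases, the base rate itself is simple arithmetic: the share of those cases that turned out the way you care about. Here’s a minimal Python sketch, assuming a hypothetical list of twelve past launches where `True` means the launch met its target (the data and the `base_rate` helper are invented to match the 1-in-4 example above).

```python
def base_rate(outcomes):
    """Fraction of comparable past cases that succeeded (True = success)."""
    if not outcomes:
        raise ValueError("no comparable cases to learn from")
    return sum(outcomes) / len(outcomes)


# Hypothetical: 12 comparable feature launches; True = met its target
past_launches = [True, False, False, False, True, False,
                 False, False, True, False, False, False]

rate = base_rate(past_launches)
print(f"Base rate: {rate:.0%} across {len(past_launches)} launches")
# Base rate: 25% across 12 launches
```

With only a dozen cases, treat the result as a rough anchor; a larger and more closely comparable set of cases gives you a more trustworthy starting point.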

Once you know the base rate for your decision, you can more realistically weigh your odds and adjust for anything that makes your situation truly unusual. This starting point isn’t “playing it safe”—it’s calibrating your judgment to reality before you get creative.

Let's see how this might look in practice when a people manager is planning a significant change:

- Milo: I'm really confident this new performance review process will boost engagement. Our team is ready for it.

- Victoria: That sounds promising. Have you looked at how often similar changes have worked at other companies?

- Milo: Not really—I've been focused on why it'll work for us specifically.

- Victoria: It might help to check the base rate. What percentage of performance review overhauls actually improve engagement?

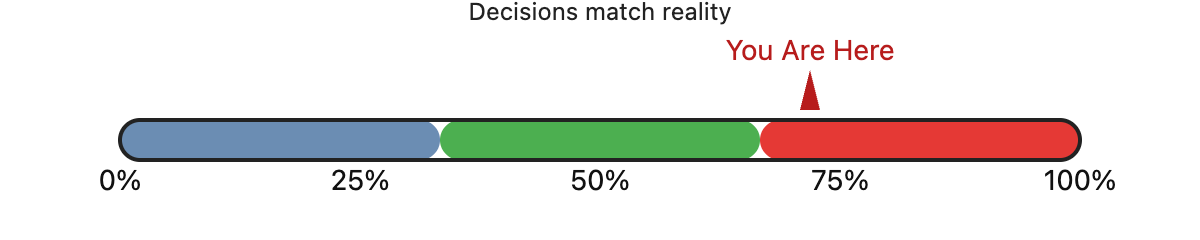

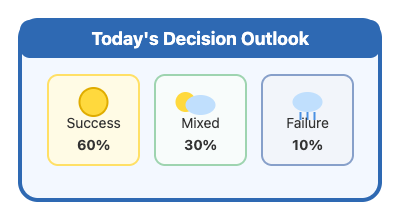

Most decisions at work aren’t certain, but we still act as if they are, putting all our chips on “yes” or “no” to sound decisive. Thinking in probabilities is a habit that separates strong decision-makers from the rest. Even basic practice, such as estimating how likely an outcome is as a percentage or as odds, improves accuracy and exposes blind spots.

Think like a meteorologist, who forecasts a 70% chance of rain rather than promising rain or sunshine. The more you use probabilistic thinking, the more natural it becomes to say, “I’d give this a 60% chance,” or to update your stance as you learn new information. You’ll find it easier to put a clear number, or even just a rough range, on your predictions instead of relying on vague terms like “pretty sure” or “unlikely.”

As you practice, you’ll also get comfortable with adjusting your estimate when new evidence comes in. Instead of sticking to your first guess, you might say, “Earlier I thought success was about 60% likely, but based on this new data, I’d revise that down to 40%.” This isn’t fence-sitting—it’s a sign that your thinking is becoming more flexible, honest, and in tune with reality.
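The lesson doesn’t prescribe a formula for revising an estimate, but Bayes’ rule is one standard way to make a revision like “60% down to 40%” explicit. The sketch below assumes hypothetical numbers: the new data is a pilot that missed its target, which you judge would happen 20% of the time if the project is headed for success and 45% of the time if it’s headed for failure. The `update` helper and its parameter names are illustrative, not from any library.

```python
def update(prior, p_evidence_if_success, p_evidence_if_failure):
    """Apply Bayes' rule and return P(success | evidence).

    prior                  -- your estimate of P(success) before the evidence
    p_evidence_if_success  -- how likely the evidence is if the project will succeed
    p_evidence_if_failure  -- how likely the evidence is if the project will fail
    """
    weighted_success = prior * p_evidence_if_success
    weighted_failure = (1 - prior) * p_evidence_if_failure
    return weighted_success / (weighted_success + weighted_failure)


# Hypothetical inputs: the pilot missed its target
prior = 0.60
posterior = update(prior, p_evidence_if_success=0.20, p_evidence_if_failure=0.45)
print(f"Before: {prior:.0%}, after: {posterior:.0%}")  # Before: 60%, after: 40%
```

Evidence that is more likely under failure than under success pulls the estimate down; how far it moves depends on the ratio between those two likelihoods, not on either number alone.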

Together, these habits—calibrating your confidence, starting with base rates, and thinking in probabilities—turn decision-making into a repeatable skill instead of a guessing game. You’ll get a chance to try these approaches in the upcoming exercises. Bit by bit, these skills add up—helping you avoid overconfidence, see beyond your immediate context, and communicate risk like a pro.