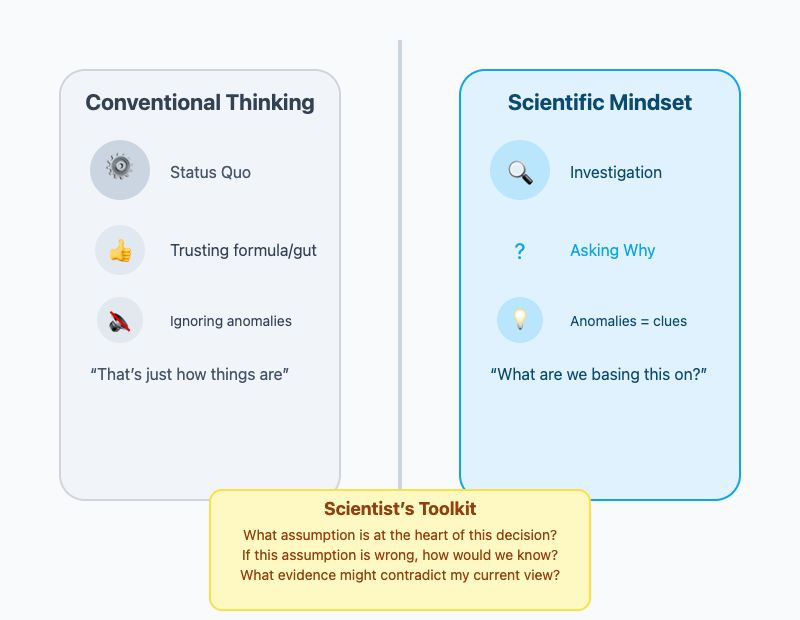

The best decisions start with questioning what you think you know. According to Stefan Thomke and Gary W. Loveman in the HBR Guide to Critical Thinking, acting like a scientist is about building intellectual humility and curiosity into your daily work. Scientists don’t settle for “that’s just how things are.” Instead, they use evidence to test their assumptions, stay alert for surprises, and remain open to changing course when the facts point in a new direction.

You might hear someone say, “I’ve always done it this way and it’s always worked.” But even proven formulas need a second look. Legendary leaders like Kazuo Hirai at Sony broke the cycle of assumption by asking, “What are we really basing this on?” When his team believed that selling more TVs was the solution, he dug into the numbers and found that volume alone couldn’t turn losses around. By switching the focus from gut feel to data, Hirai led Sony’s TV business back to profitability for the first time in over a decade.

To apply this thinking, pause before making a call and ask:

- What assumption is at the heart of this decision?

- If this assumption is wrong, how would we know?

- What evidence might contradict my current view?

Small anomalies are opportunities, not annoyances. A few unexpected complaints or a surprising result are clues to investigate, not just noise to ignore. Put simply: adopt informed skepticism, not cynicism. Instead of reflexively shooting down ideas, press for the “why” behind beliefs and look for real-world confirmation.

Skepticism is necessary, but it is only the first step. The next step is turning vague beliefs into sharp, testable statements. You’re not just arguing opinions; you’re discovering what’s actually true.

Suppose your team is facing a drop in collaboration and someone suggests that the new flexible work hours are to blame. Here’s how this belief can be transformed into a hypothesis:

- Chris: Since we started flexible work hours, our team collaboration has really dropped. Maybe we should go back.

- Meredith: What facts make you think the schedule is the cause?

- Chris: Projects have slowed since flexibility began.

- Meredith: Interesting. What else changed? Didn’t we lose two senior members at the same time?

- Chris: That’s true, but I still think flexibility is the problem.

- Meredith: How could we test it? Maybe track collaboration on teams with overlapping schedules versus those without.

Here, Meredith doesn’t settle for assumptions or opinions—she digs for evidence, uncovers other possible factors, and suggests designing an experiment:

“If we compare collaboration rates on teams with similar and different work schedules, we’ll see whether flexibility or another factor is having the most impact.”
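To make Meredith’s comparison concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the metric (weekly cross-team handoffs), the team groupings, and the numbers are invented, and a real comparison would need more teams and a check on other differences between the groups, such as the loss of senior members.

```python
from statistics import mean

# Hypothetical data: weekly cross-team handoffs per team (invented numbers).
overlapping_schedules = [14, 12, 15, 13, 16]
non_overlapping_schedules = [11, 13, 10, 12, 9]


def mean_difference(group_a, group_b):
    """Return the average of group_a minus the average of group_b."""
    return mean(group_a) - mean(group_b)


diff = mean_difference(overlapping_schedules, non_overlapping_schedules)
print(f"Difference in average weekly handoffs: {diff:.1f}")
```

A gap between the two groups supports the hypothesis but does not prove it on its own; a gap near zero suggests looking at the other factors Meredith raised.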

Not every experiment will succeed, and that’s the point. Each result, good or bad, teaches you where your assumptions were right and where they missed. Even when a hypothesis fails, you’ve gained insight that makes your next move smarter. The real miss is clinging to guesses that go untested.

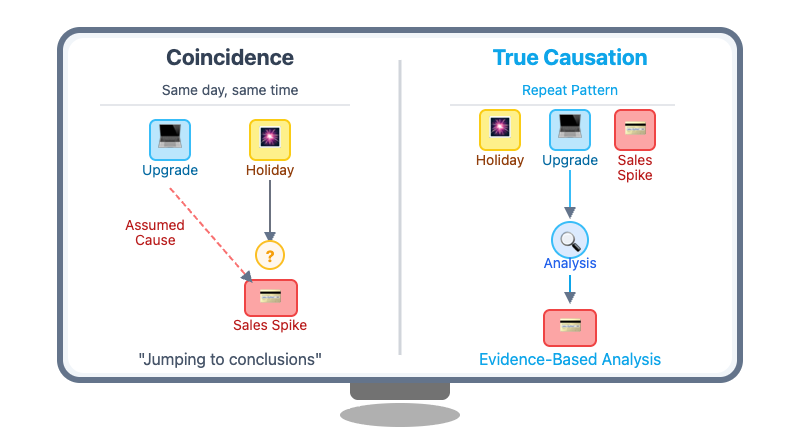

One of the most common mistakes is to assume that when two things happen at the same time, one must have caused the other. It’s easy to see a marketing campaign launch and a spike in sales, then credit the campaign for all of it. But what if sales were climbing anyway? True scientific thinking means asking, “What else could explain this?” and, “How would I rule those out?”

Try using counterfactual thinking: Would this outcome have happened without the change? Imagine a company upgrades its website on the same day a major holiday shopping season begins. Sales immediately spike, and the team credits the new website design for the surge. However, by comparing sales patterns from previous years, they realize that sales always jump during this season, regardless of changes to the website. By considering other possible explanations and looking at evidence across different time frames, the team avoids overstating the impact of their website changes and can make better-informed decisions about future investments.
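The website example can be worked through with a few lines of arithmetic. The sketch below assumes hypothetical weekly sales figures for two earlier years and the current one (all numbers and year labels are invented) and estimates what this season’s sales would likely have been without the redesign.

```python
from statistics import mean

# Hypothetical weekly sales (units) before and during the holiday season.
past_years = {
    2021: {"before": 1000, "during": 1500},
    2022: {"before": 1100, "during": 1650},
}
this_year = {"before": 1200, "during": 1860}


def lift(period):
    """Ratio of in-season sales to pre-season sales."""
    return period["during"] / period["before"]


# The counterfactual: apply the usual seasonal lift to this year's baseline.
typical_lift = mean(lift(p) for p in past_years.values())
expected_without_change = this_year["before"] * typical_lift
unexplained = this_year["during"] - expected_without_change

print(f"Expected from seasonality alone: {expected_without_change:.0f}")
print(f"Left over for other explanations: {unexplained:.0f}")
```

With these made-up numbers, the seasonal pattern alone predicts about 1,800 units, so only about 60 of the 660-unit jump is left for the redesign, or anything else, to explain.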

To separate coincidence from cause, ask yourself:

- What other factors could explain what I’m seeing?

- What would I need to see to rule out other explanations?

This kind of thinking reduces costly mistakes, especially those that come from chasing patterns that aren’t really there.

By acting like a scientist, you’ll learn to pause and question assumptions, turn beliefs into clear and testable hypotheses, and look for real evidence before making decisions. This approach helps you and your team get to the root of problems instead of reacting to surface patterns. In the coming activities, you’ll practice steering discussions away from snap judgments and toward evidence, so you and your team can make more reliable calls.