Welcome to 2D Grids and Matrix Math. In this first lesson, we’ll take the next step beyond 1D array indexing and start thinking in rows and columns. The goal is practical: each CUDA thread will compute which matrix element it owns, then convert that 2D position into the correct location inside a linear memory buffer.

Up to this point, you may have primarily seen CUDA used for 1D operations, like adding two arrays. While 1D processing is a great way to learn the basics of parallel threads, real-world GPU workloads often revolve around linear algebra and matrix operations.

Matrices are the building blocks behind many of the most important areas in modern computing:

- Machine Learning & LLMs: Training and inference for models like GPT rely on massive matrix multiplies.

- Gaming & Graphics: Pixels and vertices are processed through coordinate transforms and shader math.

- Scientific Simulations: Weather, fluid dynamics, and physics simulations operate on grid-shaped data.

To work efficiently with data like this, we want to launch threads in a way that matches the problem’s shape.

A matrix isn’t just a long list of values. It has:

- a width (columns)

- a height (rows)

So each thread should ideally know two things:

- which column it belongs to

- which row it belongs to

This 2D way of thinking becomes especially important for matrix multiplication, image processing, stencil updates, and many other kernels where neighbors and tiles matter.

So far, we have mostly launched kernels in a way that looks 1D:
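As a reminder, here is a minimal sketch of such a 1D launch. The kernel `addOne`, the helper `launch1D`, and the block size of 256 are hypothetical names and values chosen only for illustration.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Hypothetical kernel: each thread adds 1 to one element of a flat array.
__global__ void addOne(float* data, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) data[i] += 1.0f;
}

// Hypothetical helper: runs addOne over n zero-initialized floats
// and returns the result.
std::vector<float> launch1D(int n) {
    std::vector<float> host(n, 0.0f);
    float* dev = nullptr;
    cudaMalloc(&dev, n * sizeof(float));
    cudaMemcpy(dev, host.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    int threadsPerBlock = 256;                                  // assumed block size
    int blocks = (n + threadsPerBlock - 1) / threadsPerBlock;   // round up
    addOne<<<blocks, threadsPerBlock>>>(dev, n);                // plain ints: a 1D launch

    // This blocking copy also waits for the kernel to finish.
    cudaMemcpy(host.data(), dev, n * sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(dev);
    return host;
}
```

Both launch arguments are plain integers here, which is what makes the launch look one-dimensional.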

That’s perfectly valid, but under the hood CUDA launch dimensions are represented by a type called dim3, which has three components:

- .x

- .y

- .z

So the launch above is effectively the same as:
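A sketch of the explicit form, assuming a hypothetical kernel `recordShape` that simply reports the launch shape it sees, and a hypothetical helper `launchWithDim3`:

```cuda
#include <cuda_runtime.h>

// Hypothetical kernel: one thread records the launch shape reported by CUDA.
__global__ void recordShape(unsigned int* out) {
    if (blockIdx.x == 0 && threadIdx.x == 0) {
        out[0] = gridDim.x;  out[1] = gridDim.y;  out[2] = gridDim.z;
        out[3] = blockDim.x; out[4] = blockDim.y; out[5] = blockDim.z;
    }
}

// Hypothetical helper: launches with explicit dim3 values and copies the
// reported shape (grid x, y, z, then block x, y, z) into shape[0..5].
void launchWithDim3(unsigned int blocks, unsigned int threads, unsigned int shape[6]) {
    unsigned int* dev = nullptr;
    cudaMalloc(&dev, 6 * sizeof(unsigned int));

    dim3 grid(blocks, 1, 1);    // equivalent to passing `blocks` directly
    dim3 block(threads, 1, 1);  // equivalent to passing `threads` directly
    recordShape<<<grid, block>>>(dev);

    // This blocking copy also waits for the kernel to finish.
    cudaMemcpy(shape, dev, 6 * sizeof(unsigned int), cudaMemcpyDeviceToHost);
    cudaFree(dev);
}
```

Writing `dim3 grid(blocks);` would give the same shape, because the `dim3` constructor fills any omitted component with 1.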

The unused dimensions are not hiding extra threads—they simply default to 1.

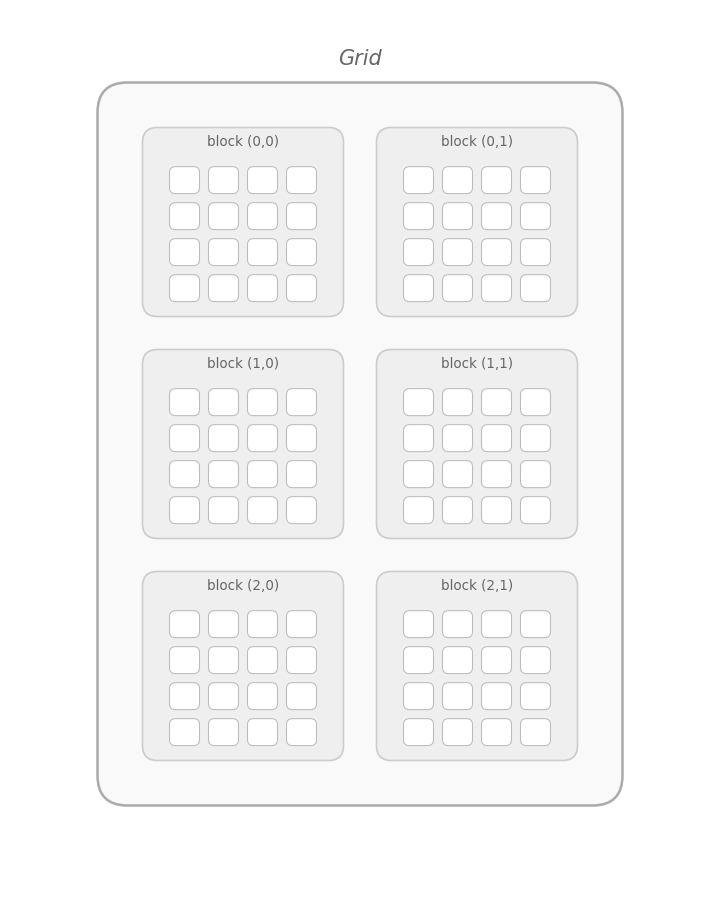

The same idea applies to blocks inside the grid. Blocks live inside a grid, and that name is literal: the launch shape can be arranged like a grid. Up to now, we’ve used a 1D grid, so we only needed .x.

That’s why earlier 1D kernels used indexing like this:
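The familiar 1D pattern, sketched with a hypothetical kernel `writeIndex1D` that stores each thread’s global index:

```cuda
#include <cuda_runtime.h>

// Hypothetical 1D kernel: each thread writes its own global index into out.
__global__ void writeIndex1D(int* out, int n) {
    // Offset of this block, plus the thread's position inside the block.
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = i;  // guard against threads past the end of the array
}
```

For example, with 4 threads per block, thread 1 of block 2 computes `i = 2 * 4 + 1 = 9`. Only the `.x` components appear because the launch is one-dimensional.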

This works for flat arrays. But for matrices, it’s much more natural to use two axes:

- .x for columns

- .y for rows

Before we define the kernel, we choose a matrix size, allocate memory, and decide how threads should be arranged.

For this example:

- W = 4 means 4 columns

- H = 3 means 3 rows

- total elements = W * H = 12

On the host, we store the matrix in a std::vector. On the device, we allocate a raw pointer with cudaMalloc.
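The setup could look roughly like the sketch below. The `MatrixBuffers` struct, the `allocateMatrix` helper, and the `-1` sentinel used to pre-fill the host vector are assumptions made for illustration; `float` is used as the element type.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int W = 4;  // columns
constexpr int H = 3;  // rows

// Hypothetical container for the two copies of the matrix.
struct MatrixBuffers {
    std::vector<float> host;  // host-side storage, W * H elements
    float* device;            // raw device pointer from cudaMalloc (nullptr on failure)
};

// Hypothetical helper: allocates W * H floats on both the host and the device.
MatrixBuffers allocateMatrix() {
    // Pre-fill the host copy with -1 so untouched elements are easy to spot.
    MatrixBuffers m{std::vector<float>(W * H, -1.0f), nullptr};
    if (cudaMalloc(&m.device, W * H * sizeof(float)) != cudaSuccess) {
        m.device = nullptr;
    }
    return m;
}
```

The caller owns the device pointer and should release it with `cudaFree(m.device)` when done.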

The important new idea is the 2D block shape:
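A sketch of how the block and grid shapes might be built. The helpers `ceilDiv` and `gridFor` are hypothetical; the rounding-up division ensures the grid covers the whole matrix even when a dimension is not a multiple of the block size.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: blocks needed to cover n elements, rounding up.
inline unsigned int ceilDiv(unsigned int n, unsigned int blockSize) {
    return (n + blockSize - 1) / blockSize;
}

// Hypothetical helper: grid shape that covers a width x height matrix.
// x spans columns, y spans rows; z defaults to 1.
inline dim3 gridFor(unsigned int width, unsigned int height, dim3 block) {
    return dim3(ceilDiv(width, block.x), ceilDiv(height, block.y));
}

// Usage for the lesson's 4 x 3 matrix:
//   dim3 block(2, 2);                  // a 2 x 2 tile of threads
//   dim3 grid = gridFor(4, 3, block);  // grid.x == 2, grid.y == 2
```

With these values the launch contains 2 x 2 blocks of 2 x 2 threads, or 16 threads for 12 matrix elements.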

Instead of a flat 1D block, each block is now a small 2 x 2 tile of threads. We chose (2, 2) here specifically to fit our tiny matrix and demonstrate how multiple blocks are created. In professional code, you will typically see larger block sizes, chosen to better utilize the hardware and align with warps (groups of 32 threads).

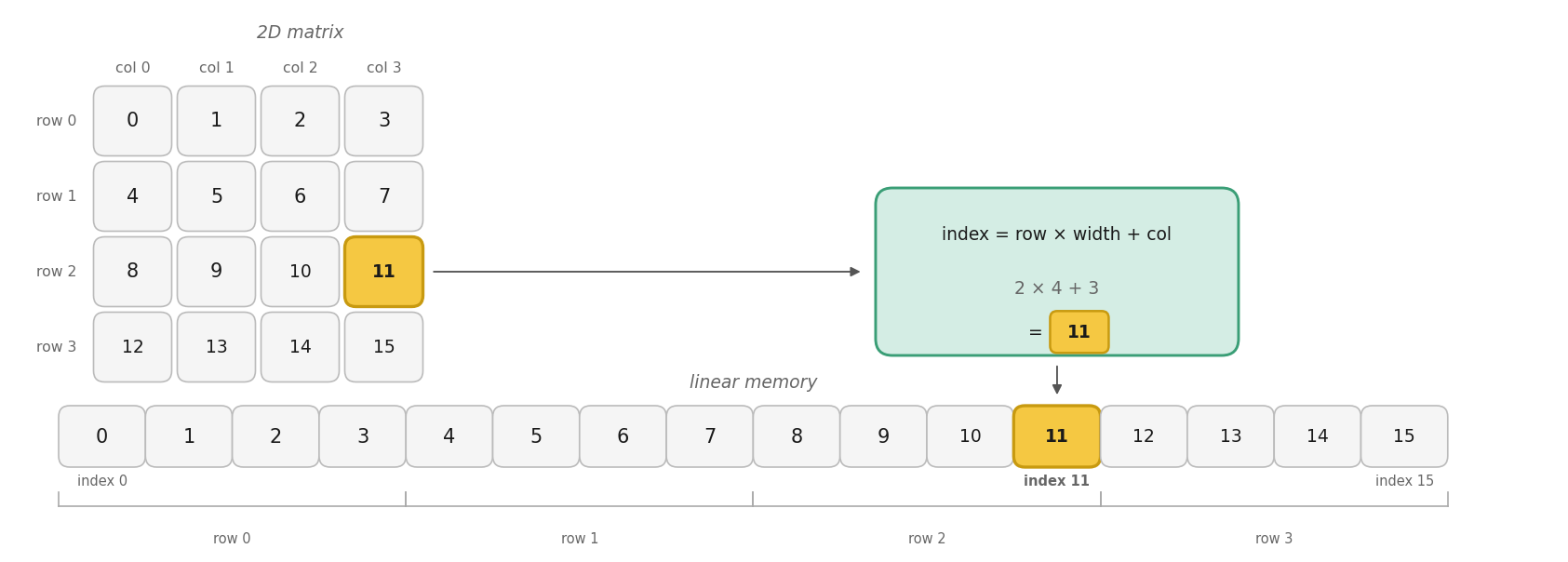

Even though a matrix looks two-dimensional, in memory it is typically stored as one long linear buffer. That means every thread must do two jobs:

- compute its 2D matrix position

- convert that position into a single linear index

For a matrix stored in row-major order, the mapping is:
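Written as a small function (the name `rowMajorIndex` is a hypothetical choice), the mapping is:

```cuda
#include <cuda_runtime.h>

// Row-major mapping: rows are stored one after another, so skipping `row`
// full rows costs row * width elements, then we step `col` into that row.
__host__ __device__ inline int rowMajorIndex(int row, int col, int width) {
    return row * width + col;
}
```

For a matrix with 4 columns, the element at row 2, column 1 lives at index `2 * 4 + 1 = 9`.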

This is the pattern we’ll use throughout the lesson:

- Compute col

- Compute row

- Convert them into index

This is the bridge between CUDA’s 2D thread layout and the actual memory buffer on the device.

Even if you launch with a “2D” dim3, the CUDA execution configuration is just a way of organizing threads—it does not magically turn your data into a real 2D matrix type.

The good news is that the grid, blocks, and threads really are multidimensional (depending on the dim3 values you choose). CUDA abstracts that for you by providing blockIdx.{x,y,z} and threadIdx.{x,y,z} so you don’t have to manually “flatten” thread IDs yourself.

Inside the kernel, however, your matrix is still just a float* pointing to a flat 1D buffer in linear memory. That means you still explicitly compute (col, row) from those multidimensional thread coordinates, and then convert that 2D position into a single linear index.

This distinction matters because memory layout (like row-major vs column-major) determines the correct mapping formula, not the launch shape. In other words, dim3 makes it easy to think in 2D for threads, but you are responsible for mapping that to the correct address in memory.
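To make the layout dependence concrete, here is a sketch that contrasts the two common conventions. The function names are hypothetical, and column-major storage is shown only for comparison; the lesson itself uses row-major.

```cuda
#include <cuda_runtime.h>

// Row-major: consecutive elements of a row are adjacent in memory.
__host__ __device__ inline int rowMajorIndex(int row, int col, int width) {
    return row * width + col;
}

// Column-major: consecutive elements of a column are adjacent in memory.
__host__ __device__ inline int colMajorIndex(int row, int col, int height) {
    return col * height + row;
}
```

In a matrix with 4 columns and 3 rows, the element at row 1, column 2 sits at index `1 * 4 + 2 = 6` in row-major storage but at `2 * 3 + 1 = 7` in column-major storage. The same thread coordinates map to different addresses, and the launch shape is identical in both cases.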

Note: The figure below demonstrates a grid of blocks. This configuration is different from our code's matrix and blocks, but it highlights how the grid organizes threads.

Now we can define the kernel itself. Each thread computes its global column and row, checks whether it’s inside the matrix, then writes to the correct linear position.
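A minimal sketch of such a kernel follows. The name `writeLinearIndex` and the parameter names are assumptions; the element type is `float`.

```cuda
#include <cuda_runtime.h>

// Sketch of the lesson's kernel (hypothetical name): each thread stores its
// own row-major linear index in the matrix element it owns.
__global__ void writeLinearIndex(float* data, int width, int height) {
    int col = blockIdx.x * blockDim.x + threadIdx.x;  // global column
    int row = blockIdx.y * blockDim.y + threadIdx.y;  // global row

    // The rounded-up grid can contain threads outside the matrix; skip them.
    if (col < width && row < height) {
        int index = row * width + col;  // row-major 2D -> 1D
        data[index] = static_cast<float>(index);
    }
}
```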

The indexing lines are the key:

- blockIdx.x and threadIdx.x locate the thread across the grid (columns)

- blockIdx.y and threadIdx.y locate the thread down the grid (rows)

Together, they produce a global matrix coordinate.

The if check matters because the rounded-up grid may launch threads that fall outside the valid matrix area. Inside bounds, each thread stores its own linear index, which makes the mapping easy to verify.
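To see how many threads the bounds check actually filters out, the following sketch counts threads inside and outside the matrix. The kernel `countThreads` and the helper `countInsideOutside` are hypothetical and exist only for this experiment.

```cuda
#include <cuda_runtime.h>

// Hypothetical kernel: counts launched threads that fall inside and outside
// a width x height matrix. counts[0] = inside, counts[1] = outside.
__global__ void countThreads(int* counts, int width, int height) {
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    if (col < width && row < height) {
        atomicAdd(&counts[0], 1);
    } else {
        atomicAdd(&counts[1], 1);
    }
}

// Hypothetical helper: launches 2 x 2 blocks over a rounded-up grid and
// copies the two counters into result[0..1].
void countInsideOutside(int width, int height, int result[2]) {
    int* d_counts = nullptr;
    cudaMalloc(&d_counts, 2 * sizeof(int));
    cudaMemset(d_counts, 0, 2 * sizeof(int));

    dim3 block(2, 2);
    dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
    countThreads<<<grid, block>>>(d_counts, width, height);

    // This blocking copy also waits for the kernel to finish.
    cudaMemcpy(result, d_counts, 2 * sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(d_counts);
}
```

For the 4 x 3 matrix, the grid rounds up to 2 x 2 blocks, so 16 threads launch: 12 land inside the matrix and 4 have `row == 3`, which is out of range. Without the check, those 4 threads would write past the end of the buffer.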

With memory ready, the launch shape chosen, and the kernel defined, we can run it. After the launch, we check for launch errors, wait for the GPU to finish, copy the results back, and verify that every element matches the expected linear index.

A CUDA kernel launch is asynchronous, so cudaDeviceSynchronize() ensures the kernel has finished before we inspect results.

The validation loop is a simple but effective test: if our 2D-to-1D mapping is correct, element i should contain exactly i.
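The launch-and-verify sequence might be sketched as follows. The helper `runAndVerify` and the kernel name `writeLinearIndex` are hypothetical, and the error handling is deliberately minimal.

```cuda
#include <cuda_runtime.h>
#include <cstdio>
#include <vector>

// Same indexing kernel as described above (hypothetical name).
__global__ void writeLinearIndex(float* data, int width, int height) {
    int col = blockIdx.x * blockDim.x + threadIdx.x;
    int row = blockIdx.y * blockDim.y + threadIdx.y;
    if (col < width && row < height) {
        int index = row * width + col;
        data[index] = static_cast<float>(index);
    }
}

// Hypothetical helper: runs the kernel on a width x height matrix and
// returns true only if every element i holds the value i.
bool runAndVerify(int width, int height) {
    const int n = width * height;
    std::vector<float> h_data(n, -1.0f);
    float* d_data = nullptr;
    if (cudaMalloc(&d_data, n * sizeof(float)) != cudaSuccess) return false;

    dim3 block(2, 2);
    dim3 grid((width + block.x - 1) / block.x, (height + block.y - 1) / block.y);
    writeLinearIndex<<<grid, block>>>(d_data, width, height);

    cudaError_t err = cudaGetLastError();                   // launch errors
    if (err == cudaSuccess) err = cudaDeviceSynchronize();  // wait; execution errors
    if (err == cudaSuccess)
        err = cudaMemcpy(h_data.data(), d_data, n * sizeof(float),
                         cudaMemcpyDeviceToHost);
    cudaFree(d_data);
    if (err != cudaSuccess) {
        std::fprintf(stderr, "CUDA error: %s\n", cudaGetErrorString(err));
        return false;
    }

    // Validation: element i must contain exactly i.
    for (int i = 0; i < n; ++i)
        if (h_data[i] != static_cast<float>(i)) return false;
    return true;
}
```

Calling `runAndVerify(4, 3)` exercises the lesson’s matrix; trying a size such as `(5, 7)` checks that the rounded-up grid and bounds check also handle odd dimensions.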

Finally, we print the data in matrix form, report whether the indexing worked, then release device memory and return a success code. The nested loops rebuild the 2D view on the host using the same row-major formula i * W + j.
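The host-side reconstruction can be sketched like this. The helper `formatMatrix` and its space-separated layout are assumptions; it builds a string rather than printing directly so the result is easy to inspect.

```cuda
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper: formats a row-major buffer as H lines of W values.
std::string formatMatrix(const std::vector<float>& data, int W, int H) {
    std::ostringstream out;
    for (int i = 0; i < H; ++i) {      // i walks the rows
        for (int j = 0; j < W; ++j) {  // j walks the columns
            // Same row-major formula the kernel used: i * W + j.
            out << data[i * W + j] << (j + 1 < W ? " " : "\n");
        }
    }
    return out.str();
}
```

Passing the 12 copied-back values with `W = 4` and `H = 3` produces three lines of four numbers each.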

The output shows the data in matrix form. Reading across each row, we see the expected linear order from 0 to 11, confirming that each thread wrote to the correct element.

In this lesson, we expanded our view of CUDA launches from 1D to 2D using dim3. We prepared a 2D launch, computed global row and col values, converted them to a linear index using row * width + col, and verified that every matrix element landed in the correct place.

This pattern is foundational in CUDA because many GPU problems involve images, matrices, and other grid-shaped data. In the practice section ahead, you’ll reinforce this idea by writing and checking 2D indexing yourself.